There is a fraud type that walks into your branch looking completely normal.

Clean application. Plausible income. Real-looking documents. A photograph attached, as required. The loan officer checks the form, verifies the ID, and sees nothing unusual. Because there is nothing unusual, at least, nothing visible from the single application sitting on the desk.

What is not visible: the same person submitted a nearly identical application at three other branches last week. Different name each time. Same face every time.

This is identity reuse fraud. And according to NASSCOM-DSCI research, fake identities, including those built around reused photographs, have increased by 450% since 2022 in India. It is not a rare edge case. It is one of the most systematically exploited vulnerabilities in loan origination today.

Key Takeaways

- Identity reuse fraud is invisible to manual review by design: a loan officer can only compare a face against their own memory; they cannot compare it against thousands of prior applications from branches they have never visited.

- The same photograph submitted under different name variants is the most common form: R. Kumar, Ramesh Kumar, R.K. Sharma are different enough on paper to avoid flag, identical enough to a photo matching engine to be caught instantly.

- The rejected application repository is where most identity reuse fraud is caught: fraudsters rejected once almost always try again; without a searchable photo history, that rejection means nothing.

- Face embeddings, not photographs, are what AI systems compare: a mathematical vector representation of facial geometry that works across different lighting, angles, partial obscuration, and photo filters.

- A well-configured system returns a match result in under 200ms: across a repository of 10 million-plus records, faster than a loan officer can open the next document.

- The combination of photo matching and OCR field cross-check is what catches sophisticated fraudsters: those who change their photograph but reuse a mobile number, PAN, or address are caught by the document engine even when the face match is inconclusive.

- Detection must happen at origination, not post-disbursement: a fraud flag raised after the loan is sanctioned is forensics, not prevention.

How Identity Reuse Fraud Actually Works

The mechanics are straightforward, which is part of why the fraud is so persistent.

A person, or an organised operation using recruited individuals, submits loan applications with the intention of obtaining credit they either cannot qualify for legitimately or have no intention of repaying. To avoid being identified across applications, they vary the identity details: different name spellings, different addresses, different mobile numbers, sometimes different PAN cards (fraudulent or others’ documents).

What they cannot easily change is the photograph. A KYC photograph is required. It has to look like the applicant. And it has to be consistent enough with the identity document photographs to pass a manual check.

So the photograph stays the same. The paperwork changes.

In a branch-based lending environment without cross-branch photo matching, this works with uncomfortable reliability. Consider how the fraud plays out:

Application 1: Submitted at Branch A under Name Variant 1. Income inflated modestly. PAN card, real or forged. The loan officer sees a first-time applicant. No prior history at this branch. Application proceeds.

Application 2: Submitted at Branch B, or through a DSA channel, or via a mobile app, under Name Variant 2. Slightly different address. Different mobile number. Same photograph. To Branch B’s system, which has no visibility into Branch A’s applications, this is also a first-time applicant.

Application 3 and 4: Same pattern. Different channels. Different name variants. Same face.

If even two of these succeed, and in a system without photo matching, there is no structural reason they should not, the fraudster has obtained multiple loans with no intention of repaying any of them. The NPA is discovered weeks or months later, by which point recovery is difficult and the operational loss is crystallised.

The fraud works because it exploits the fundamental constraint of manual review: a human being can only compare what is in front of them against what they can personally remember. They cannot compare a face against thousands of rejected applications from other branches. They were never designed to.

Why Name Variants Make This Harder to Catch Without Photo Matching

A critical nuance worth understanding: Indian names are structurally more susceptible to variant-based identity fraud than many other naming conventions.

A person named Ramesh Kumar can legitimately appear as Ramesh Kumar, R. Kumar, R.K., Ramesh K., or even with father’s name variants and regional script transliterations. These are all the same person. But in a text-based database search, they may not match, because the string “R.K. Sharma” does not match “Ramesh Kumar Sharma” without fuzzy matching logic applied.

Fraudsters know this. Name variants that look like transcription differences or abbreviation choices are deliberately chosen to avoid simple text-match rejection flags. Each name variant passes individual scrutiny. None of them, individually, looks suspicious.

This is why photo matching is the detection mechanism that matters here, not name matching, not PAN matching alone, not address verification alone. The face is the constant. The name is the variable the fraudster controls.

A face matching engine that compares facial embeddings, the mathematical representation of facial geometry, is indifferent to what name appears on the form. It compares faces, not names. And if the same face has appeared before, it finds it.

The Technology Behind Photo Matching: How It Actually Works

This is where most explanations either go too technical or too vague. Let’s be precise without being impenetrable.

Step 1: Face Detection and Extraction

When a loan application is submitted, the photograph is passed to a deep learning model. The model does two things: it detects the face within the photograph (handling partial frames, varied backgrounds, different photo formats), and it extracts a facial embedding, a numerical vector, typically 512 dimensions of float32 values, that represents the geometric relationships between key facial features.

This embedding is not the photograph. It is a mathematical description of the face derived from the photograph. Raw photographs are not stored in the search index, only the embeddings. This is important both for privacy compliance and for system performance.

Step 2: The Vector Database

The embeddings of every prior application photograph are stored in a vector database, a specialised database designed specifically for similarity search across high-dimensional numerical vectors. Technologies like FAISS, Pinecone, and Milvus are built for exactly this: finding the vectors most similar to a query vector, quickly, across very large datasets.

The index type used for production-scale loan fraud detection, IVF-PQ (Inverted File Index with Product Quantisation), is specifically designed for sub-second recall across datasets of tens of millions of records. It trades a small amount of recall completeness for a very large gain in search speed.

Step 3: The Similarity Search

The embedding extracted from the new application photograph is submitted as a query to the vector database. The database returns the most similar embeddings from its index, the stored faces that are geometrically closest to the query face, along with a similarity score for each match.

A match above the configured confidence threshold triggers a flag. Below the threshold, no flag. The threshold is configurable based on the institution’s risk appetite, higher threshold means fewer false positives but potentially more missed matches; lower threshold means more matches flagged but more analyst review required.

The entire process, face detection, embedding extraction, vector database search, result return, completes in under 200 milliseconds across a repository of 10 million or more records.

Step 4: What the Analyst Sees

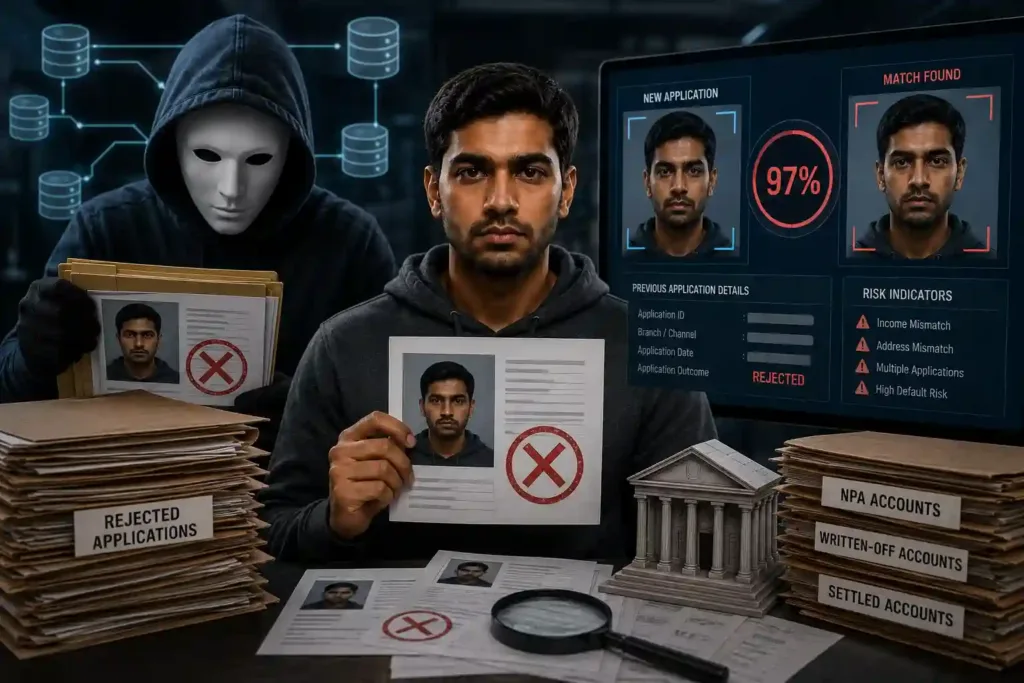

The output presented to the reviewing analyst is not a raw similarity score. It is a structured case: the new application photograph side by side with the matched photograph from the historical repository, the similarity score, the identity details of both applications, the date and branch or channel of the prior application, and the outcome of that prior application, rejected, approved, NPA, written-off.

If the prior application was rejected for fraud indicators, that context is immediately visible. The analyst can see not just that there is a face match, but what happened the last time this face appeared.

Why the Rejected Application Repository is Your Most Valuable Fraud Asset

Most lending institutions think of their rejected application data as a compliance archive, records retained because they have to be, not because they are operationally useful.

This is exactly backwards.

The rejected application repository is the single most valuable fraud detection asset a lender holds, because fraudsters who are caught and rejected almost always try again. The same face that was rejected at Branch A for income inflation will show up at Branch B, at a DSA partner, through the mobile app. The face does not change. The attempt does not stop.

Every rejected application photograph that is not in the searchable photo repository is a gap, a face that, if it reappears, will not be recognised.

The same logic applies to NPA accounts, written-off loans, and settled loans. A borrower who defaulted and was written off is a known defaulter. If they reappear under a different name, that connection should be surfaced immediately at the point of the new application, not discovered later during recovery proceedings.

The architecture that makes this work: the photo repository must be updated in real time. The moment an application is rejected, that photograph and its associated fields must be immediately searchable against the next incoming application. A repository updated weekly or monthly is not a fraud prevention tool, it is a historical record.

What Sophisticated Identity Reuse Fraudsters Do Differently

Experienced fraudsters, particularly those operating loan rings rather than individual fraud, know that photo matching exists at some institutions. They attempt countermeasures.

Different photographs submitted across applications

Different lighting, different angle, cap or glasses added, photograph taken at a different point in time. A naive pixel-level comparison system might miss these variations. A face embedding system does not, because it captures the underlying facial geometry, not the surface appearance of the photograph. Partial obscuration, photo filters, different lighting, and different angles all produce embeddings that are still close enough to trigger a match above threshold.

Photograph manipulation

Some sophisticated fraud attempts involve digitally altering photographs to change specific facial features, typically minor alterations that are intended to reduce the similarity score below detection threshold. The effectiveness of this depends on the quality of the face recognition model. Models trained specifically on diverse Indian face data, with adversarial examples in training, are substantially more robust to these manipulations than general-purpose models.

When photo matching is inconclusive, the OCR layer catches what the face engine misses

This is the most important structural point about the detection architecture: it operates across two independent engines simultaneously. A fraudster who successfully changes their photograph enough to reduce face match confidence below threshold still needs to submit real-looking documents. Those documents contain fields, mobile numbers, PAN numbers, addresses, employer IDs, that can be cross-referenced against the historical field index.

A person who changed their photograph but reused their actual mobile number, because they need to receive loan disbursement communications, will be caught by the OCR cross-check even if the face match is inconclusive. The two engines create overlapping detection nets: a fraudster who slips through one faces the other.

The Scale Problem: Why This Cannot Be Solved Manually

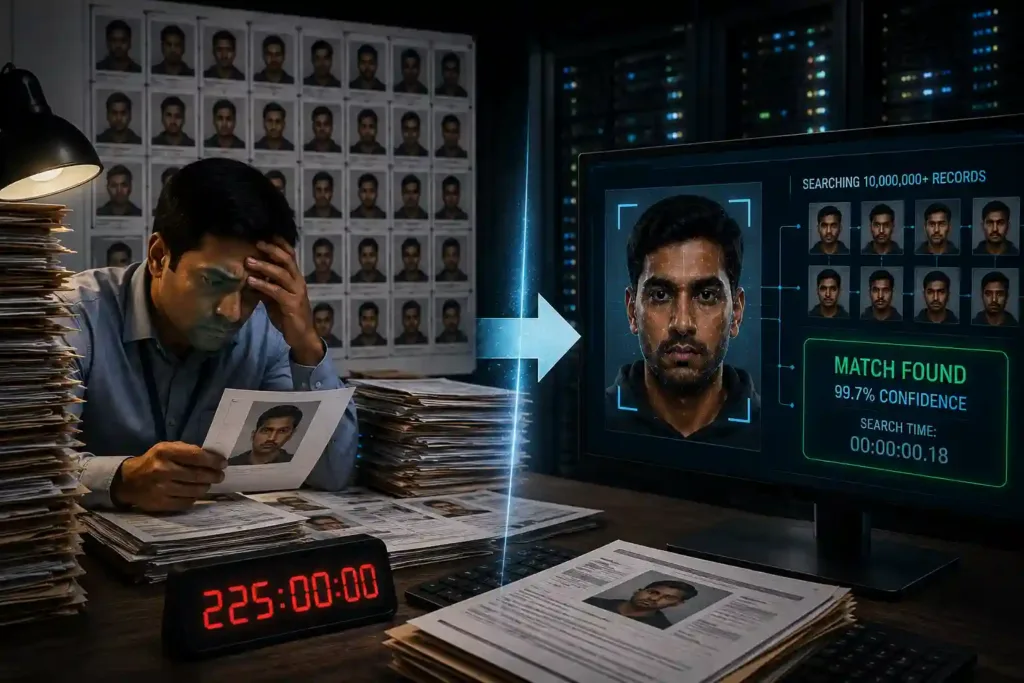

It is worth being explicit about the numbers, because the scale of the problem is what makes manual review categorically, not just practically, insufficient.

A mid-sized NBFC processing 300 applications per day accumulates roughly 90,000 applications per year. Over three years of operation, that is 270,000 photographs in the historical repository.

For a manual reviewer to compare a new application photograph against 270,000 historical photographs would require reviewing each one. At even three seconds per comparison, an optimistic estimate for a meaningful visual comparison, that is 810,000 seconds per application. 225 hours. For one application.

The mathematics of manual review make cross-application photo matching impossible at any scale above a handful of applications per month. This is not a criticism of loan officers, it is a statement about the limits of human visual memory and processing speed applied to a task that requires searching millions of records.

A face embedding search across a vector database of 10 million records takes under 200 milliseconds. The same task that is humanly impossible at scale takes less time than a loan officer takes to open the next document.

This is not AI assisting human review. It is AI performing a task that human review is structurally incapable of performing at the required scale and speed.

Frequently Asked Questions

1. What is identity reuse fraud in loan applications?

Identity reuse fraud is a loan fraud typology where the same person submits applications under different names, typically name variants or abbreviations, across multiple branches, channels, or lenders, using the same or similar photograph each time. Because each application appears to be from a first-time applicant at the branch or institution receiving it, manual review does not detect the duplication. AI-powered photo matching detects it by comparing facial embeddings rather than identity text fields, making it indifferent to name variations.

2. How does face matching detect duplicate identity fraud?

Face matching extracts a mathematical representation of the applicant’s facial geometry, called a face embedding, from the submitted photograph and compares it against the embeddings of all prior application photographs stored in a vector database. If a stored embedding is sufficiently similar to the new one, above a configured confidence threshold, a match is flagged. The system works across different lighting conditions, angles, partial obscuration, and photo filters because it captures underlying facial geometry rather than surface photographic appearance.

3. What is a face embedding and how is it different from a photograph?

A face embedding is a numerical vector, typically 512 dimensions, that represents the geometric relationships between key facial features extracted from a photograph. It is not the photograph itself. The embedding captures the mathematical structure of the face in a form that can be compared against millions of other embeddings in milliseconds. This is what enables sub-200ms search across repositories of 10 million-plus records, and it also means raw photographs do not need to be stored in the search index, which has privacy compliance advantages.

4. Why can’t manual review catch identity reuse fraud?

Manual review works on one application at a time and is limited to what the reviewing officer can compare from memory. A mid-sized NBFC accumulates hundreds of thousands of historical application photographs over several years. Comparing a new application photograph against that entire historical repository manually, at a meaningful level of visual scrutiny, would require hundreds of hours per application. It is not a question of insufficient effort; it is a mathematical impossibility at operational scale. AI face matching does this comparison in under 200 milliseconds.

5. What happens when a fraudster uses different photographs across applications?

Face embedding models capture facial geometry rather than photographic appearance, making them robust to variations in lighting, angle, partial obscuration, and photo filters. Minor digital alterations intended to reduce similarity scores are addressed through models trained on adversarial examples. Additionally, even when face match confidence is below threshold, the OCR cross-check engine simultaneously verifies document fields, a fraudster who changes their photograph but reuses a mobile number, PAN, or address will still be flagged by the document intelligence layer.

6. What data sources should be included in the photo repository?

The photo repository should include rejected applications (the highest-value source, fraudsters who are rejected almost always try again), NPA and written-off loan accounts, active loan files, credit card applications, and account opening KYC photographs. The repository must be updated in real time, the moment an application is rejected, that photograph should be immediately searchable against incoming applications. A repository refreshed weekly or monthly is not adequate for real-time origination fraud detection.

7. How quickly should a photo matching alert be generated?

Detection must occur before the loan sanction decision, which in digital lending environments can happen within minutes of application submission. A production-grade face matching system running against a repository of 10 million-plus records should return a match result and composite risk score within seconds of application receipt. Detection that takes hours is retrospective analysis, not origination fraud prevention.