Somewhere between the first AI pilot and the fifteenth failed rollout, most organizations reach the same uncomfortable conclusion: AI is not a technology problem. It is an organizational one.

88% of organizations now use AI in at least one business function, up from 55% just two years ago, according to McKinsey’s 2025 survey. Yet despite this surge in adoption, the AI project failure rate sits between 70% and 85%. The gap is not capability. It’s coordination, governance, and strategy, which is precisely where an AI Center of Excellence comes in.

What is an AI Center of Excellence?

An AI Center of Excellence (AI CoE) is a dedicated internal unit within an organization whose purpose is to centralize AI expertise, standardize its deployment, and govern its use across every function.

Think of it this way: without an AI CoE, every team experiments independently, buys overlapping tools, and solves the same problems in parallel. Inconsistent AI practices across teams make scaling difficult. Limited visibility means leadership cannot track progress or impact. And as AI influences core decisions, the cost of failure increases.

An AI CoE solves for all three by creating a single, accountable source of AI strategy, standards, and scale.

It consists of a cross-functional team that plans, builds, and scales AI-based technologies, while setting strategy, implementing guidelines, and helping the organization comply with regulations and mitigate risks.

Why Organizations Are Building AI CoEs Right Now

As early as 2024, Gartner predicted that more than 75% of enterprises would reduce their focus on experimenting with AI and move toward operationalizing it. That operationalization requires a structured framework, and organizations without one are finding that AI delivers noise, not results.

Research found that 26% of organizations had introduced several GenAI-enhanced applications into production, up from 17% just months prior. The pace of deployment is accelerating, but so are the risks of doing it wrong.

Two primary drivers are pushing enterprises toward AI CoEs: governance and costs. As organizations pursue AI, everyone will inevitably face data privacy and data sovereignty challenges, and a lack of centralized governance makes overcoming those challenges essentially impossible.

The Standard AI CoE vs. The High-Stakes AI CoE

Here’s where the real conversation begins. The typical enterprise AI CoE is built to optimize: reduce costs, improve customer experience, automate workflows. These are legitimate goals. But they assume a relatively forgiving environment, one where a miscalibrated model means a bad recommendation, not a compromised investigation.

Organizations in law enforcement, intelligence, financial intelligence, and banking, financial services, and insurance (BFSI) operate in a fundamentally different context. When their AI gets it wrong:

- A criminal network stays hidden.

- A financial crime goes undetected.

- Sensitive citizen data is exposed.

- An operation is compromised.

An AI CoE for these organizations must be built on entirely different principles.

Four Pillars of a High-Stakes AI CoE

1. On-Premise or Air-Gapped Infrastructure

For most enterprises, cloud AI is an efficiency question. For security-sensitive organizations, it is a non-starter. Organizations need a centralized data hub and often maintain at least one copy of their data on private infrastructure, while keeping a second in a cloud environment, a strategy that also helps avoid vendor lock-in.

A high-stakes AI CoE must operate on data infrastructure it fully controls, whether on-premise, sovereign cloud, or air-gapped deployment.

2. Multimodal Intelligence, Not Just Language Models

Text-based AI is only part of the picture. Agencies dealing with real-world threats need AI that understands images, video, audio, and call records simultaneously. A robust AI CoE in this space must integrate computer vision, speech analytics, OSINT processing, and structured data analysis, all under one governance umbrella.

3. Explainability and Auditability at Every Layer

A strong governance framework requires transparency about training data and decision reasoning, clear accountability structures, and lifecycle oversight including auditing, drift monitoring, and retraining triggers.
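To make drift monitoring and retraining triggers concrete, here is a minimal Python sketch. It assumes the CoE tracks a model's output scores and compares a recent window against a baseline using the Population Stability Index (PSI). The function names, the equal-width binning, and the 0.2 cutoff are illustrative assumptions (0.2 is a common rule of thumb, not a requirement from any standard).

```python
import math
from typing import Sequence

# Assumed rule-of-thumb cutoff; a real CoE would set this per model.
RETRAIN_THRESHOLD = 0.2


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """PSI between two score samples, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        eps = 1e-6  # floor empty bins so the logarithm stays defined
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def needs_retraining(
    baseline: Sequence[float],
    current: Sequence[float],
    threshold: float = RETRAIN_THRESHOLD,
) -> bool:
    """Flag the model for review when the score distribution has shifted."""
    return population_stability_index(baseline, current) > threshold
```

In practice a check like this would run on a schedule, and a positive result would open a review rather than retrain automatically, so that a human stays accountable for the decision.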

For law enforcement and financial intelligence specifically, unexplainable AI outputs are operationally useless and legally problematic. Every decision an AI system influences must be traceable.
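One way to picture traceability is a tamper-evident decision log. The sketch below is an assumption about how such a log could be structured, not a description of any specific product: each entry stores the model version, a reference to the input, the output, and a rationale, and is chained to the previous entry with a SHA-256 hash. The class name `AuditLog` and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    """Stable SHA-256 over an entry, with keys sorted for determinism."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries = []

    def record(self, model_version: str, input_ref: str, output: str, rationale: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "input_ref": input_ref,
            "output": output,
            "rationale": rationale,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry["hash"] = _digest(entry)  # hash is computed before the key is added
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was reordered."""
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Because every entry depends on the hash of the previous one, editing an old decision after the fact breaks verification for the whole chain, which is the property an auditor would rely on.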

4. A Unified Data Architecture Across Silos

The greatest challenge high-stakes organizations face is not a lack of data. It is that the data sits in incompatible silos. Intelligence gathered by one unit cannot be correlated with evidence held by another. The AI CoE must establish shared data standards and interoperable architectures that allow AI to work across the full intelligence picture.
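A small Python sketch shows what a shared data standard buys. It assumes two hypothetical units that store phone numbers under different field names and formats. Each unit's records are mapped into one common `Observation` shape, after which cross-silo matches become a simple grouping step. All field names, unit names, and the digit-only normalization rule are illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """Common record shape that every silo is mapped into (hypothetical)."""
    source: str
    identifier: str
    record_id: str


def normalize_phone(raw: str) -> str:
    """Keep digits only, so '+1 555-0100' and '15550100' compare equal."""
    return "".join(ch for ch in raw if ch.isdigit())


def from_unit_a(row: dict) -> Observation:
    # Unit A (assumed): call records keyed by 'msisdn'
    return Observation("unit_a", normalize_phone(row["msisdn"]), row["call_id"])


def from_unit_b(row: dict) -> Observation:
    # Unit B (assumed): case files keyed by 'phone'
    return Observation("unit_b", normalize_phone(row["phone"]), row["case_id"])


def correlate(observations: list) -> dict:
    """Return identifiers that appear in more than one source."""
    by_identifier = defaultdict(list)
    for obs in observations:
        by_identifier[obs.identifier].append(obs)
    return {
        ident: group
        for ident, group in by_identifier.items()
        if len({o.source for o in group}) > 1
    }
```

The design point is that the adapters (`from_unit_a`, `from_unit_b`) are the only silo-specific code. Everything downstream, including any AI model, works against the shared shape.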

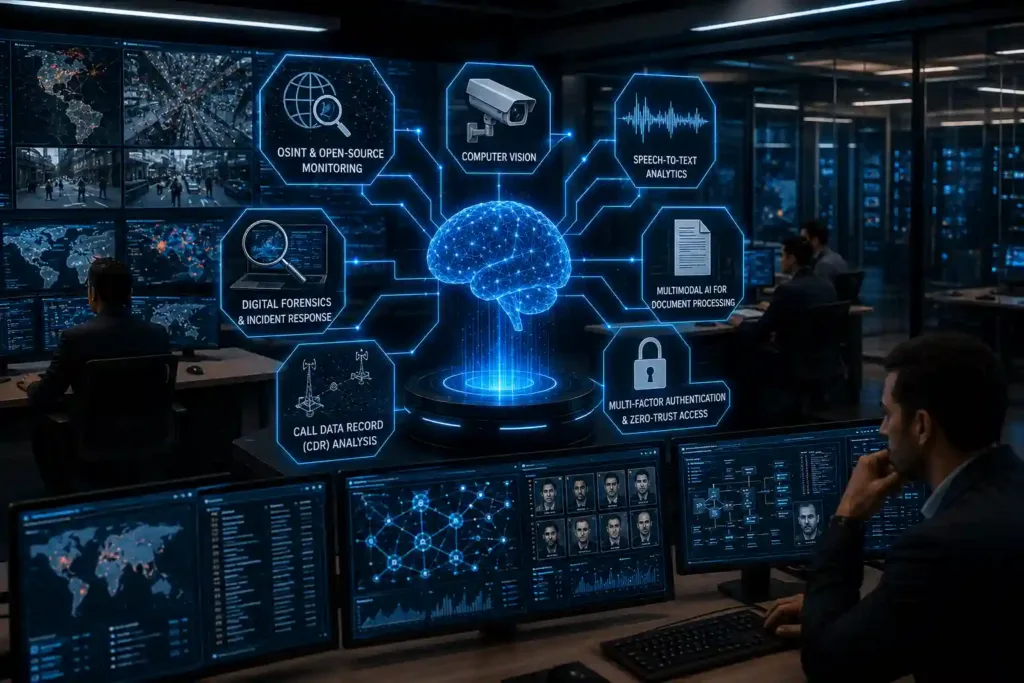

What Technology Does a High-Stakes AI CoE Actually Need?

The tools that support a high-stakes AI CoE are different from what a commercial enterprise deploys. The technology stack typically includes:

- OSINT and open-source monitoring: continuous collection and analysis from surface web, dark web, and social media, across languages and geographies

- Computer vision: real-time video analytics and facial recognition, deployable at scale without cloud dependency

- Speech-to-text analytics: transcription and analysis of audio from radio, emergency communications, and recorded calls in multiple languages and accents

- Digital forensics and incident response: rapid, remote investigation of compromised endpoints without physical access

- Multimodal AI for document and data processing: OCR, summarization, and cross-format synthesis for high-volume intelligence reports

- Call data record (CDR) analysis: network mapping and movement tracking from communication data

- Multi-factor authentication: zero-trust access controls for the AI infrastructure itself

None of these is a standalone tool. In an AI CoE, they work together as a coordinated system, governed by shared standards and accountable to a central oversight function.
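As one illustration of the components listed above, the sketch below shows the core idea behind network mapping from call data records. It assumes a CDR can be reduced to a `(caller, callee)` pair, which is a simplification, since real records also carry timestamps, durations, and cell-site data. The function names are hypothetical.

```python
from collections import Counter, defaultdict


def build_contact_graph(cdrs: list) -> Counter:
    """Count calls per undirected pair of numbers, ignoring self-calls."""
    edges = Counter()
    for caller, callee in cdrs:
        if caller != callee:
            edges[frozenset((caller, callee))] += 1
    return edges


def most_connected(edges: Counter, top_n: int = 3) -> list:
    """Rank numbers by how many distinct contacts they have."""
    neighbours = defaultdict(set)
    for pair in edges:
        a, b = tuple(pair)
        neighbours[a].add(b)
        neighbours[b].add(a)
    # Sort by contact count (descending), then by number for a stable order
    return sorted(neighbours, key=lambda n: (-len(neighbours[n]), n))[:top_n]
```

A ranking like this is only a starting point for an analyst. Under the governance model described here, any lead it produces would still need to be logged and explainable.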

The Bottom Line

An AI Center of Excellence is not a technology initiative. It is an organizational capability, one that determines whether AI becomes a force multiplier or an expensive distraction.

For enterprises in standard commercial environments, the CoE conversation is about speed, scale, and ROI. For organizations where decisions affect investigations, national security, or financial integrity, the CoE conversation is about something more fundamental: whether AI can be trusted to operate at the level the mission demands.

Building that trust requires more than a governance charter. It requires the right architecture, the right tools, and a technology partner who understands what is actually at stake.

Frequently Asked Questions

1. What is an AI Center of Excellence?

An AI Center of Excellence (AI CoE) is a dedicated organizational unit that centralizes AI expertise, sets governance standards, manages vendor relationships, and ensures AI initiatives align with business goals and regulatory requirements. It is the structure that helps organizations move from isolated AI experiments to enterprise-wide, scalable AI deployment.

2. What is the difference between an AI CoE and a regular AI team?

A regular AI team builds and deploys models within a specific function. An AI CoE sets the rules, standards, and architecture for how AI is used across the entire organization. It is less about building and more about governing, enabling, and scaling AI across every department and use case.

3. Why do law enforcement and financial intelligence agencies need a different type of AI CoE?

Standard enterprise AI CoEs are optimized for efficiency and customer outcomes. For agencies where AI informs investigations, fraud detection, or national security decisions, the stakes of an error are categorically higher. A high-stakes AI CoE prioritizes on-premise infrastructure, explainability, auditability, and multi-source intelligence integration, requirements that most commercial AI frameworks do not address.

4. What are the most common reasons AI CoEs fail?

The most common failure points are: inconsistent AI practices across teams, limited leadership visibility into AI impact, and rising risk exposure as AI influences more decisions. In high-stakes environments, there is an additional failure mode: deploying AI tools that were not designed for secure or sovereign data environments.